You find a winning campaign. ROAS is strong. You increase the budget. Performance holds for a few days - then drops. You pull the budget back down, but ROAS never quite recovers to where it was.

If this pattern is familiar, you're not alone. It's one of the most common problems Shopify stores face when scaling Meta ads. Most explanations focus on creative fatigue, audience saturation, or campaign structure. But there's a root cause that goes deeper - and it's one that most advertisers completely overlook.

What Actually Happens When You Scale

To see why ROAS drops when you scale, you need to understand what Meta's algorithm is actually doing with your budget.

Audience saturation.

When your campaigns perform well, Meta is showing them to your highest-quality audience segments - the users whose behavior patterns most closely match your previous converters. As you increase budget, Meta exhausts those high-quality pockets faster. It then expands into broader, lower-quality segments. Conversion rates drop. CPA rises. ROAS falls.

Learning phase resets.

Large budget increases (more than 20–30% in a short window) can trigger a learning phase reset. Meta's algorithm has to re-learn how to allocate your increased budget effectively, which means a period of higher instability and typically weaker performance.

Signal degradation at scale.

At higher spend, you're reaching a larger and more diverse audience. More of the incoming traffic consists of lower-intent users. Those lower-quality interactions - clicks that don't convert, visits that don't progress through the funnel - enter your data pool and dilute the algorithm's model of what a good customer looks like. ROAS degrades further.

These are real dynamics. But addressing them with the standard playbook - gradual budget increases, horizontal scaling, creative refresh - only treats the symptoms. The root cause often runs deeper.

The Overlooked Root Cause: Data Quality

Here's the question most scaling guides don't ask: how complete is the conversion data Meta is receiving in the first place?

If your tracking setup is client-side only - Meta Pixel in the browser - you're likely losing 15–30% of your conversions before they ever reach Meta. Ad blockers intercept pixel events. iOS privacy settings limit attribution, and iOS 26 expands Link Tracking Protection to strip fbclid from URLs across more apps. Safari's cookie restrictions fragment customer journeys. And Google Consent Mode V2 enforcement (since July 2025) adds another layer of consent-based data loss.

The consequence: Meta's algorithm is learning from an incomplete dataset. It doesn't know about the conversions it can't see. It can't fully understand what makes your best customers convert.

What this means at scale:

When your campaign is small and targeting narrow, defined audiences, the algorithm's partial data is sufficient. It finds the high-converting users within that limited pool even with incomplete signals.

But as you scale and expand reach, the algorithm needs to identify high-value users within a much larger, more diverse population. With incomplete training data, it's less equipped to do this accurately. It misidentifies potential customers, over-spends on low-intent audiences, and under-invests in the segments that would actually convert.

The ROAS crash isn't just about audience saturation. It's the algorithm hitting the limits of what it can do with incomplete data. Meta's Andromeda ad retrieval engine makes this dynamic even more pronounced - its deep neural networks rely on conversion signals to match ads to the right users in real time.

How Incomplete Data Sabotages Scaling

Think of Meta's algorithm as a machine that finds patterns. It looks at every conversion event it has received and asks: what behavior patterns predict a purchase? Then it finds users who match those patterns and bids to show them your ads.

When 15–30% of your purchases are invisible to the algorithm:

Your high-value audience model is smaller.

The algorithm builds its lookalike model from a subset of your actual buyers. It misses the purchasing patterns of ad blocker users, iOS users, and privacy-conscious browsers - who may have different demographic and behavioral characteristics than the buyers the algorithm can see.

Your audience expansion is less accurate.

As you scale and Meta expands beyond your core audience, it's using an imperfect model. It finds users who look like the buyers it can see - not necessarily the buyers you actually have.

Your optimization signals are weaker.

Meta's bidding decisions happen in real time at the impression level. Better conversion signals → more confident bidding → better placements at lower costs. Weaker signals → more conservative bidding → higher CPMs and less efficient spend at scale.

This is why the scaling crash happens faster than it should, and why pulling back the budget doesn't fully restore performance: the algorithm's model has already been shaped by incomplete data, and it takes time to recalibrate, even once better tracking is in place.

The Standard Scaling Fixes - and Their Limits

Performance marketers have developed a standard playbook for scaling Meta ads:

Gradual budget increases.

Increasing budgets by 10–20% every 3–4 days instead of large jumps avoids learning phase resets and gives the algorithm time to adapt. This works - but it doesn't address the underlying data quality problem.

Horizontal scaling.

Duplicating ad sets or launching new campaigns with lookalike audiences or interest-based targeting to find new audience pockets. This can extend your reach, but lookalike quality depends on the quality of the source events. Lookalikes built from incomplete conversion data produce weaker results.

Creative refresh.

New hooks, formats, and visuals prevent ad fatigue and re-engage audiences. Essential - but creative quality has diminishing returns if your targeting is off due to poor data quality. For guidance on creating high-performing ad content, see our UGC framework for Meta and TikTok ads.

Campaign restructuring.

Consolidating ad sets, switching to Advantage+, or adjusting campaign objectives. These optimizations matter, but they operate on top of whatever data quality your tracking provides.

All of these tactics are valid. But none of them fix incomplete training data. They're optimizing the campaigns that run on top of broken tracking. You're tuning a race car with the wrong fuel.

How Better Data Changes the Scaling Equation

When Meta receives complete, enriched conversion data from every sale - including the 15–30% that client-side tracking misses - the algorithm's model improves across every dimension:

More accurate lookalike audiences.

Built from complete buyer data, not a subset. The model captures the full range of customer profiles who convert, including those who use ad blockers or iOS devices.

More confident bidding at scale.

Complete conversion signals allow the algorithm to bid more confidently even as audiences expand. It knows what a high-value user looks like across a wider population.

More stable performance.

When the algorithm has been trained on complete data, it makes better decisions during budget increases. Performance degrades less sharply as you scale.

Larger effective audience.

Complete event coverage means Meta has seen more actual converters. Lookalike audiences built from this data are both more accurate and, in some cases, larger.

Real result:

Petrol Industries implemented TrackBee's server-side tracking and saw their Meta ROAS double. The improvement came without changes to their ad strategy, creative, or bidding - purely from giving Meta's algorithm better data to work with.

For the technical details of how server-side tracking improves the data Meta receives, see: How to improve Meta's Event Match Quality score for better ad performance.

A Data-First Scaling Strategy

The right order of operations for scaling Meta ads:

Step 1: Audit your tracking.

Check Meta Events Manager. Compare the purchase count Meta reports to your Shopify order count for the same period. The gap, expressed as a share of Shopify orders, tells you how many conversions Meta is missing. If the gap is 15% or more, your data quality problem is limiting your scaling potential.
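The audit comes down to one ratio. A minimal sketch, assuming you have already read both counts for the same date range by hand (the function names and sample numbers are hypothetical):

```python
def tracking_gap(shopify_orders: int, meta_purchases: int) -> float:
    """Share of Shopify orders that Meta did not record as purchases."""
    if shopify_orders <= 0:
        raise ValueError("shopify_orders must be positive")
    return (shopify_orders - meta_purchases) / shopify_orders


def needs_tracking_fix(shopify_orders: int, meta_purchases: int,
                       threshold: float = 0.15) -> bool:
    """True when the gap reaches the 15% level named in the audit step."""
    return tracking_gap(shopify_orders, meta_purchases) >= threshold


if __name__ == "__main__":
    # Illustrative numbers only: 1,000 Shopify orders, 780 purchases in Meta.
    gap = tracking_gap(1000, 780)
    print(f"Missing from Meta: {gap:.0%}")
    print("Fix tracking first:", needs_tracking_fix(1000, 780))
```

A negative gap means Meta is reporting more purchases than Shopify has orders, which usually points to duplicate events rather than missing ones.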

Step 2: Fix the data before scaling the budget.

Implement server-side tracking with deduplication before your next budget increase. Let the algorithm retrain on complete data for at least 2–4 weeks. You'll likely see ROAS improvements even before any budget changes.
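For readers who want to see what deduplication involves, the sketch below builds a server-side Purchase event in the shape Meta's Conversions API accepts. It is an illustration under assumptions, not TrackBee's implementation: the helper names, the `order-<id>` event ID scheme, and the sample order are hypothetical, and the HTTP call that would send the payload is left out.

```python
import hashlib
import json
import time


def hash_email(email: str) -> str:
    """Trim, lowercase, and SHA-256 hash an email before sending it as `em`."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(order_id, email, value, currency, event_time=None):
    """Build one server-side Purchase event.

    The event_id is derived from the order ID (a hypothetical scheme) so the
    browser pixel can send the same ID and Meta can merge the two events.
    """
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        "event_id": f"order-{order_id}",
        "action_source": "website",
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"currency": currency, "value": value},
    }


if __name__ == "__main__":
    # Sample order data is made up for illustration.
    event = build_purchase_event("1001", " Jane@Example.com ", 59.90, "EUR",
                                 event_time=1700000000)
    print(json.dumps({"data": [event]}, indent=2))
```

Deduplication only works if the browser event carries the same event name and event ID as the server event. With the Meta Pixel, that means passing the ID as the `eventID` option on the matching `fbq('track', 'Purchase', ...)` call.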

Step 3: Scale gradually on clean data.

With server-side tracking in place, increase budgets by 15–25% every 3–5 days. The algorithm's stronger model handles expansion more effectively. You can scale further before hitting the audience quality cliff. At this stage, separating new from returning customers in your data becomes critical - see our guide on optimizing conversions for new customers.

Step 4: Monitor Event Match Quality.

Keep your Meta EMQ score at 7 or above. EMQ is a leading indicator of data quality - if it starts dropping, your tracking may have issues. Read more about optimizing Event Match Quality.

Step 5: Pair with creative refresh.

As you scale, refresh creative in sync with budget increases. Better data improves targeting; better creative improves conversion. The two together produce the strongest results.

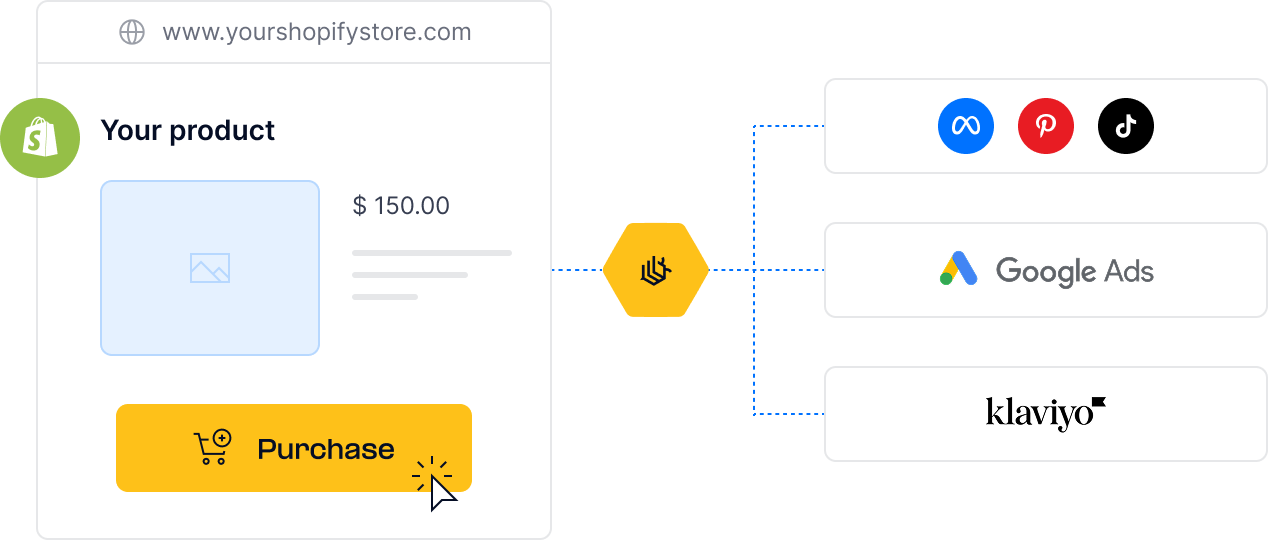

Step 6: Align data across all channels.

Consistent, enriched data flowing to Meta, Google, TikTok, and Klaviyo simultaneously means all your campaigns benefit from the same quality improvement. See: Why consistent data across all your ad channels is essential for performance.

Frequently Asked Questions

If I implement server-side tracking now, will it fix my ROAS immediately?

Data improvements are immediate - TrackBee starts capturing and enriching events within 24–48 hours. Campaign performance improvements follow as Meta's algorithm retrains on the better data. Most stores see measurable ROAS improvements within 2–4 weeks.

Will switching to server-side tracking trigger a learning phase?

Not necessarily. Adding server-side tracking doesn't change your campaign settings, which is the primary learning phase trigger. However, if your conversion volume changes significantly (due to deduplication correcting inflated numbers), the algorithm may enter a brief learning period.

At what budget level does data quality become critical for scaling?

Data quality matters at any budget, but the impact amplifies as you scale. At lower spends with narrow audiences, the algorithm can succeed with partial data. As you expand audiences and increase budget, complete training data becomes increasingly critical for maintaining efficiency.

What's the relationship between Event Match Quality and scaling performance?

EMQ is a direct proxy for how well Meta can match your conversion events to real users. Higher EMQ means better algorithm training and more accurate audience targeting - both of which directly improve scaling performance. Target EMQ of 7+ before significant budget increases.
